I’ve been building websites and generating masses of free Google search engine traffic for over 10 years. In that time I’ve built the best WordPress SEO Theme available online and made tons of money, yet I’ve never generated or used a Google XML Sitemap.

When I decided to write this part of the WordPress SEO tutorial I was entrenched in my SEO view: since I’ve never used an XML sitemap and have generated tens of millions of search engine visits to my own sites, Google XML sitemaps are not only not required, they are a hindrance to SEO analysis.

After researching what others think about XML sitemaps I’m not so sure.

Rand Fishkin, for example, advises using XML sitemaps. Rand Fishkin is the founder of SEOMoz (now just Moz) and he does tend to be on the ball SEO wise. There aren’t many like him, most so called SEO experts don’t have a clue :-(

Why I Do NOT Generate a Google XML Sitemap

For as long as I can remember Google has found and ranked web pages based on links. Generally speaking, if Google couldn’t find a web page via a backlink (internal or incoming) it was pointless submitting the URL to Google via its free submission service or via an XML sitemap, since it won’t generate traffic. The goal of good SEM isn’t to get a million pages spidered by Googlebot and indexed in Google, it’s to persuade Google to send a million visitors to your site. Indexed pages are not the same as search engine visitors.

All my current websites were either built manually by me or are running under WordPress with the WordPress SEO theme (Stallion Responsive) I develop. I know precisely how Googlebot will find my sites and spider through each site’s link structure. There are no URLs on any of my sites that I want indexing in Google that Googlebot can’t easily find without a sitemap. So for my sites I don’t need to submit a Google XML sitemap for Google to FIND a particular URL.

That in itself is not a good reason for not creating and submitting an XML sitemap.

My good reason is I want to know if an important part of a site isn’t indexed in Google because of a lack of link benefit flowing to that section of the site (Google can find the URL through links, but there aren’t enough links for Googlebot to visit regularly) or for another reason. Using sitemaps just to get a URL indexed could distract from seeing backlink problems etc…

Basically if there aren’t enough backlinks to keep Googlebot spidering an important URL on a regular basis I want to know sooner rather than later so I can do something about it. If an entire site is regularly spidered just because there’s a Google XML sitemap I’ve lost an important diagnostic SEO tool.

In my SEO experience there’s no long term benefit in Googlebot finding a URL quickly UNLESS the information is time sensitive.

If I found that a URL I wanted indexing and ranking tended not to be spidered regularly I could add more links. Adding an XML sitemap would mean I’d lose this valuable SEO data.

Do XML Sitemaps Increase Google Traffic?

This is an important SEO question and one I’ll now need to test :-) Right now I don’t know for sure, but I have always seen XML sitemaps in the same category as search engine submissions: a waste of time. Yes, Google will send its spider to all pages of a site, but will posts Googlebot couldn’t find via links actually generate traffic?

I suspect not, but will have to devise a fair SEO test since none of my sites (over 130 domains worth) have inaccessible content I want indexed.

Google XML Sitemaps SEO Test

Thought of an SEO test, first some background on Stallion SEO Super Comments.

As I write this it’s 8th March 2014, although I’ve owned this domain for well over a year the first content was added in December 2013 (couple of posts), but the bulk of the content was added early February 2014, so most of this site went live over the past few weeks. Currently there’s around 30 WordPress posts and a few static pages, so a small site that is going to be easily spidered by Googlebot (easy to check if less than 50 pages are indexed).

Many of the posts on this site are not new, I moved them from other SEO relevant sites I own (4 or 5 of the SEO Tutorial articles for example) to consolidate WordPress SEO relevant content on one domain (this one).

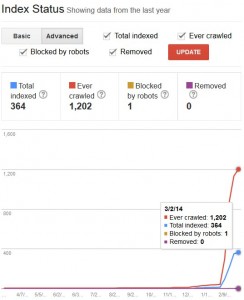

The moved posts came with over 300 comments and since I use the SEO Super Comments Stallion feature a lot of them have been spidered and indexed by Googlebot. You’ll note the discrepancy between ever crawled (over 1,000) and indexed (364), some of that is because I also moved comments around between posts (used move comments WordPress plugins), so for a time Google would have found the same comments on two posts (Google will figure out they’ve been moved eventually :-)).

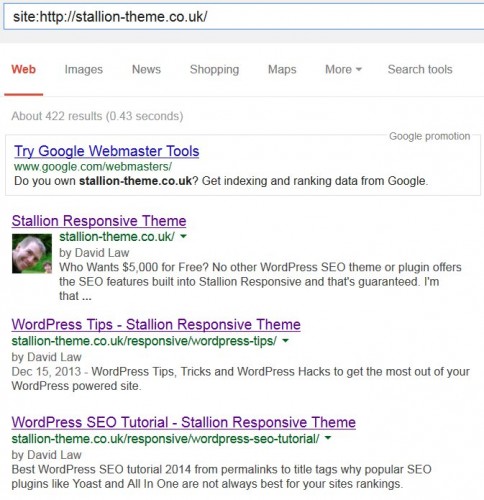

Use the site:domain search in Google to see what’s indexed on a domain:

site:https://stallion-theme.co.uk/

Right now Google has indexed 422 pages from this site, the number of pages isn’t always accurate (note the difference between a Google search and Webmaster Tools Index Status), some searches I see over 1,000 pages indexed which is not true. Still it’s a good guide for when you don’t own the domain, but want to see how much is indexed.

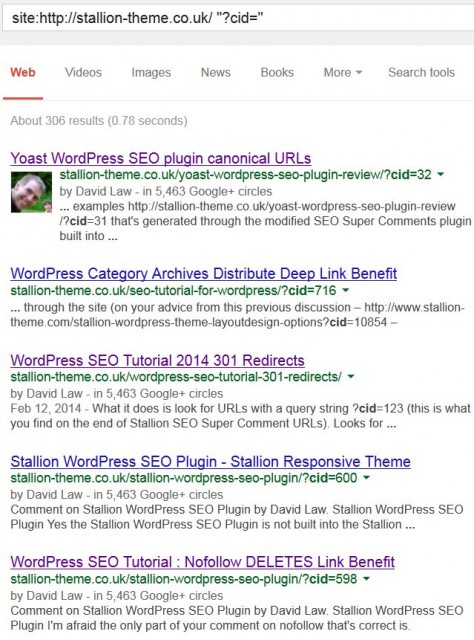

This means I have over 300 of the comments indexed in their own right as Stallion SEO Super Comments, although not a perfect way to view them all you can get an idea with this Google site search:

site:https://stallion-theme.co.uk/ "?cid="

This is searching this domain and looking for pages that include “?cid=” which is part of the URL of all Stallion SEO Super Comments. You can use this search format to look for indexed pages that cover specific keyphrases. To see how well you are covering a niche, try “Panda SEO” (88 results, covered well), “Hummingbird SEO” (1 result, need to work on that niche :-)).

It’s not perfect since this article will also show up (when indexed) since I’ve included “?cid=” in this body text.
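If you run these site: searches a lot, the query string is easy to build in code. What follows is only a sketch in Python: the function name `site_search_url` is made up for illustration, and it assumes Google's usual `https://www.google.com/search?q=` URL format.

```python
from urllib.parse import urlencode


def site_search_url(domain, phrase=None):
    """Build a Google search URL for a site: query, optionally with an exact phrase."""
    query = f"site:{domain}"
    if phrase:
        # Speech marks ask Google for an exact match on the phrase.
        query += f' "{phrase}"'
    return "https://www.google.com/search?" + urlencode({"q": query})


# Everything indexed on the domain.
print(site_search_url("https://stallion-theme.co.uk/"))
# Only pages that include the Super Comments marker.
print(site_search_url("https://stallion-theme.co.uk/", "?cid="))
```

Swap the phrase for a keyphrase such as Panda SEO to check how well a niche is covered.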

I use the Stallion SEO Super Comments feature to get content indexed that might not warrant a post, with a comment you can be much less formal (heck of a lot quicker to run off a comment than a post) and of course my visitors comment and by changing the comments titles (another Stallion feature) I can target their comments to relevant SERPs. For example in the screenshot above you can see a comment indexed with the title:

WordPress SEO Tutorial : Nofollow DELETES Link Benefit

Read the comment Nofollow DELETES Link Benefit and you can see it’s informal, a really quick comment (quick for me anyway) that would have taken me 5-10 minutes to write. I wouldn’t write an SEO tutorial article just to make that one point (the XML sitemap article will take a couple of hours to write not including research), but by using the comments that are indexed I have long tail top 10 Google SERPs like:

Nofollow DELETES Link Benefit

Nofollow Link Benefit

I have hundreds of long tail SERPs like these for super SEO comments.

What Happened to the Google XML Sitemap SEO Test?

Now you know how the Stallion SEO Super Comments work you’ll note only largish comments generate a link to the super comment. On this site I have it set to only add the link for comments with 400 or more characters in the comment body text, so if a comment is shorter than that the link isn’t added. So we have some shorter comments that do generate an SEO Super Comment (the URL exists for all comments, even single word comments), but they are NOT linked to from anywhere: I don’t want to waste link benefit on a “Great Article” comment.
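The rule boils down to a character count checked against a threshold. Here is a minimal Python sketch of that idea. It is not the Stallion code (the theme runs under WordPress), the function name is hypothetical, and it assumes a plain character count of the comment body.

```python
LINK_THRESHOLD = 400  # characters; the setting quoted above for this site


def gets_internal_link(comment_body: str, threshold: int = LINK_THRESHOLD) -> bool:
    """Return True when a comment is long enough to be linked to its Super Comment URL.

    Assumption: length is a plain character count of the comment body text.
    """
    return len(comment_body) >= threshold


# Hypothetical comments, purely for illustration.
short_comment = "Great Article"
long_comment = "A detailed point about nofollow links. " * 12  # well over 400 characters

for body in (short_comment, long_comment):
    status = "linked" if gets_internal_link(body) else "URL exists, but unlinked"
    print(len(body), status)
```

Either way the Super Comment URL exists, the threshold only decides whether anything links to it.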

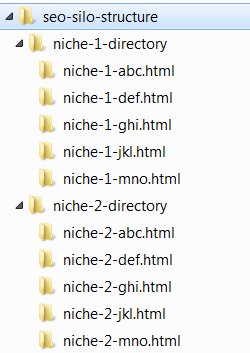

My XML sitemap SEO test will involve finding a way to generate a Google XML Sitemap including links to all SEO Super Comments including the short ones (they’ll be perfect for this SEO test), create some short comments targeting specific long tail keyword SERPs and see if ONLY having them found via an XML sitemap generates Google traffic.

That’s going to be fun to code :-(

In my SEO opinion if hard to find content (no backlinks, internal or otherwise) doesn’t generate search engine traffic there’s no point Google spidering and indexing it.

Will post the sitemap SEO test results when I have them (no time frame).

XML Sitemap SEO Test Update

Only took an hour or so to create a modified version of the Google XML Sitemaps WordPress Plugin (used V3.4) that outputs all Stallion SEO Super Comments URLs whether the comment is large or small. So on 8th March 2014 submitted an XML sitemap to Google.
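For anyone curious what such a sitemap boils down to, below is a rough Python sketch. It is not the modified plugin, just an illustration under assumptions: the helper names are hypothetical, the example.com URLs are made up, and it assumes a Super Comment URL is the post permalink with “?cid=” and the comment ID appended. The `xml_declaration` argument needs Python 3.8 or later.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def super_comment_url(post_url: str, comment_id: int) -> str:
    # Assumption: the Super Comment URL is the post permalink plus "?cid=<comment id>".
    return f"{post_url}?cid={comment_id}"


def build_sitemap(post_urls, comments) -> bytes:
    """Build sitemap XML listing every post plus every comment URL.

    comments is an iterable of (post_url, comment_id) pairs. No length
    filter is applied, so short, unlinked comments are listed too.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    all_urls = list(post_urls) + [super_comment_url(p, c) for p, c in comments]
    for url in all_urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical data: one post with two comments.
posts = ["https://example.com/a-post/"]
comments = [("https://example.com/a-post/", 1), ("https://example.com/a-post/", 2)]
print(build_sitemap(posts, comments).decode("utf-8"))
```

The key point for the SEO test is the missing length filter: every comment URL goes in, including the ones nothing links to.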

XML sitemap URL: https://stallion-theme.co.uk/sitemap.xml

Currently there are 402 URLs in the sitemap. The URLs including “?cid=” are the ones of interest, though not all of them lack internal links: only the smaller comments aren’t indexed already. Will be interesting to see if the smaller comments rank for any of the SERPs their titles are targeted at: even though they are small comments I had already given all comments on this site an SEO’d comment title.
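To separate the “?cid=” URLs from the rest of a sitemap, something along these lines would do. It is a sketch only: the function name is hypothetical and the sample XML uses made-up example.com URLs rather than this site's real sitemap.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def split_sitemap_urls(sitemap_xml: str):
    """Return (comment_urls, other_urls) from sitemap XML, split on the '?cid=' marker."""
    root = ET.fromstring(sitemap_xml)
    locs = [(loc.text or "").strip() for loc in root.findall("sm:url/sm:loc", NS)]
    comment_urls = [u for u in locs if "?cid=" in u]
    other_urls = [u for u in locs if "?cid=" not in u]
    return comment_urls, other_urls


# Hypothetical sitemap content for illustration.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a-post/</loc></url>
  <url><loc>https://example.com/a-post/?cid=1</loc></url>
  <url><loc>https://example.com/a-post/?cid=2</loc></url>
</urlset>"""

comment_urls, other_urls = split_sitemap_urls(sample)
print(len(comment_urls), "Super Comment URLs,", len(other_urls), "other URLs")
```

This only tells you which URLs are Super Comments, not which of them lack internal links, so the short comments still need picking out by length.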

David Law

WordPress XML Sitemap

David:

I understand that Stallion adds a sitemap on a page within wordpress, but does it also create a sitemap.xml to submit to Google or do we need to add a plugin to do that? If so, what do you recommend?

Thanks for an awesome theme. I am really looking forward to 6.1… not to put any pressure on you of course;)

Google XML Sitemap SEO Value

There’s no built in Google sitemap.xml file with Stallion.

I haven’t added the option because it has little if any value in the real world and can make it harder to determine issues with spidering within a site.

All pages of a site should be spiderable by default, if Google can’t find a page through normal spidering you are doing something wrong that should be fixed. This problem can be masked by using an xml sitemap, the pages Google isn’t finding naturally can be found via the xml sitemap.

Google is not going to give a page decent rankings if it’s only found via an xml sitemap, so there’s nothing to gain SEO wise having pages indexed Google isn’t finding naturally.

That being said there’s plenty of free Google xml sitemap plugins in the WordPress plugin repository, never used one so no idea which is best.

Stallion 6.1 update is going extremely well, added another feature of a featured post slideshow for the home/archive pages with loads of different image effects (images fading in/out sort of stuff). Basically select up to 20 posts to add to the slideshow and it shows an image with a short excerpt of each post in a slideshow. Still planning to release this month, the slideshow was the last major feature I wanted to add for 6.1.

David

WordPress SEO Test XML Sitemaps Google Traffic Generation

As mentioned in the main Google XML Sitemaps article I’ve submitted my first XML sitemap to Google for this site.

There are URLs on this site with content (not much) that have no backlinks (internal or otherwise), Google doesn’t know they exist (at least not from a link). The XML sitemap should be the only way for Google to find all the URLs.

I have my doubts, I can see Googlebot spidering the content via the sitemap on a regular basis, so might keep it indexed long term, but I highly doubt the content will rank for anything worthwhile.

Easiest way to run an SEO test is to remove as many variables as possible, so I obviously can’t link to any of the Stallion SEO Super Comments URLs that are too small to automatically generate a link. There’s going to be plenty of URLs to play with since comments that are below 400 characters lack the links, for example:

“?cid=926” : Comment Title – “AdSense Cheating isn’t Illegal”

If you search Google for the title you’ll see the main article the comment is on is ranking in Google (not because of the XML sitemap) at https://stallion-theme.co.uk/adsense-click-exchange-how-to-cheat-google-adsense/comment-page-2/#comment-926. That tells me it’s an easy long tail SERP and it will be interesting to see what happens when Google spiders and indexes the Super Comment URL.

Will the main article still be ranked for the above SERP, or will the Super Comment URL with no backlinks be ranked? We shall see.

Next we have a short comment whose comment title I’ve just changed, so we have a unique long tail SERP.

“?cid=915” : Comment Title – “Legit Ways to Make Money from AdSense”

If you do the exact search in Google (exact search means surrounded by “Speech Marks”) right now there are no exact matches. After Google indexes this comment and the comment I just changed the title on there will be at least 4 pages using the above text. The comment without a backlink will use the above text as the title tag, so if Google isn’t taking the links into account you would expect the URL with the exact match title tag to rank first.

That will do for now, will think up a few long tail SERP tests and add them to the comments here as well (new comments).

David Law

WordPress Google XML Sitemaps SEO Test Results

Google has used the XML sitemap I generated with the Stallion Responsive SEO Super Comments URLs included and the “Legit Ways….” test has been indexed and ranked in Google.

These are early results (hours into the SEO test), so not a lot can be concluded beyond Google will use an XML sitemap to index pages that lack links (we already knew that) and it will in the short term rank them (that’s not surprising, it’s what happens long term that’s important).

I’m avoiding using the comment title here so this linked comment doesn’t compete for the SERP.

The test SERP is long tail and unlikely to generate much if any traffic, but it is higher than I’d expect, the URL has no backlinks so it’s not costing this site any link benefit to be indexed or ranked.

Looking forward to seeing the long term SEO test results.

David Law

Free Unlimited XML Sitemap Generator Test

This is an SEO test for the Free Unlimited XML Sitemap Generator Google search.

Apparently there are sites that can generate an XML site map just from a URL. Cool.

Basically you enter your URL in a form and the sites generate a Google XML sitemap.

The SEO test is to determine if this comment without a link can rank for the SERP Free Unlimited XML Sitemap Generator.

David Law

Google XML Sitemap SEO Test Result

I’ve given the SEO test over 6 weeks to run and the results aren’t very good.

Although pages on this site are found for the test SERP “Free Unlimited XML Sitemap Generator Test”, it’s not the unlinked comment that’s ranking, it’s the main post.

Only when you do an exact Google search (within “speech marks”) does the unlinked test comment rank number one in Google.

This exact search with speech marks: “Free Unlimited XML Sitemap Generator Test”

Right now (May 31st 2014) there are 60+ results, all of them from this site (no other website uses that exact phrase).

Now compare to the standard Google search without the speech marks. Google lists just under 10,000 pages found (remember no site other than this one uses the exact phrase) and the first listing from this site is the main Google XML Sitemaps article (which is linked multiple times), not the unlinked comment. The main article is listed in Google at around 13th, which isn’t a great result and will be based on the body text within the content (these comments use the phrase multiple times).

This tells us Google is spidering and indexing the unlinked page (that’s an interesting SEO test result), but Google doesn’t appear to rank it particularly well for a standard Google search, I checked the top 100 results and didn’t find the unlinked comment: so it’s indexed, but not ranked for that SERP even though the title tag is an exact match (should be the best match from this domain).

As it is right now I don’t see much SEO value in having Google spider/index unlinked content via an XML sitemap, but then I don’t see any SEO harm in it either (I have the modified XML sitemap plugin running, so won’t disable it now).

The unlinked pages are unique content that isn’t costing me any PR/link benefit for Google to spider/index and long term other websites might link to these unlinked pages passing link benefit to the rest of the site. You also gain the tiny amount of intrinsic PR built into a web page for ‘free’, so overall might be helping the entire site SEO wise a very small amount.

David Law
