Comment on Cloak Affiliate Links Tutorial by SEO Dave.

Generally speaking, most entries in a robots.txt file are a waste of time. The default action is to allow spidering, so telling a bot it can spider something it would have spidered anyway achieves nothing.
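You can see the default in action with a minimal sketch that uses Python’s standard-library `urllib.robotparser` as a stand-in for a search engine’s parser. Real crawlers use their own implementations, and the URL and the `allowed` helper are made up for illustration. An empty rule set and one that explicitly allows everything give the same answer.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_lines, agent, url):
    """Hypothetical helper: True if robots_lines let `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_lines)  # parse() also marks the rules as loaded
    return parser.can_fetch(agent, url)


url = "https://example.com/2019/05/some-post/"
no_rules = []                              # no robots.txt entries at all
redundant = ["User-agent: *", "Allow: /"]  # explicitly "allow everything"

print(allowed(no_rules, "Bingbot", url))   # True
print(allowed(redundant, "Bingbot", url))  # True
```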

Most of the directories and files you added to your robots.txt file are a waste of time, and one is potentially damaging:

Do you use the default setup of storing images under /wp-content/? If so, you are blocking them from being spidered with:

User-agent: *

Disallow: /wp-content/

You allow Googlebot to spider the uploads folder, but what about Bing and the other search engines?
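Here’s a rough way to check this, again with Python’s `urllib.robotparser`. The robots.txt below is a hypothetical reconstruction, since the file being discussed isn’t shown: a Googlebot group that re-allows /wp-content/uploads/ plus a catch-all group that disallows /wp-content/. Any bot outside the Googlebot group falls through to the catch-all and loses the images.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: uploads re-allowed for Googlebot only.
# Python's parser uses the first rule that matches inside a group, so Allow
# goes first; Google picks the most specific rule, which agrees here.
ROBOTS_TXT = [
    "User-agent: Googlebot",
    "Allow: /wp-content/uploads/",
    "Disallow: /wp-content/",
    "",
    "User-agent: *",
    "Disallow: /wp-content/",
]

parser = RobotFileParser()
parser.parse(ROBOTS_TXT)

image = "https://example.com/wp-content/uploads/2019/05/photo.jpg"
for bot in ("Googlebot", "Bingbot", "DuckDuckBot"):
    print(bot, parser.can_fetch(bot, image))
# Googlebot True
# Bingbot False
# DuckDuckBot False
```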

If you ever add a standalone file with a .php extension, it won’t be spidered.

Personally, as a user with about 70 WordPress sites, I don’t really use a robots.txt file; mine contains only this:

User-Agent: *

Crawl-Delay: 20

This slows down spidering for some bots (Googlebot ignores it). I’ve had problems with too many bots spidering the hell out of my sites and causing resource issues on the server, so if a few spiders slow their crawl rate, it might help a bit. Only use it if you have an issue (I have a lot of links to my sites, which means spiders pretty much take up permanent residence on my servers).
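For bots that honour it, Crawl-Delay is generally read as the number of seconds to wait between requests, so a value of 20 caps such a bot at 86,400 / 20 = 4,320 page requests a day. The sketch below reads the value with Python’s `urllib.robotparser` (`crawl_delay()` needs Python 3.6 or later). It also shows why the two lines should sit together in the actual file: in Python’s parser, and possibly others, a blank line straight after `User-Agent` ends the group, so the `Crawl-Delay` line is dropped.

```python
from urllib.robotparser import RobotFileParser

# The two lines shown above, kept together in one group.
together = RobotFileParser()
together.parse(["User-Agent: *", "Crawl-Delay: 20"])

delay = together.crawl_delay("Bingbot")       # 20 (seconds between requests)
max_requests_per_day = 24 * 60 * 60 // delay  # 86400 // 20 = 4320
print(delay, max_requests_per_day)            # 20 4320

# Same lines with a blank line in between: the group ends at the blank
# line, so Python's parser never records a Crawl-Delay for it.
split = RobotFileParser()
split.parse(["User-Agent: *", "", "Crawl-Delay: 20"])
print(split.crawl_delay("Bingbot"))           # None
```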

That’s the entire contents of the robots.txt files on 95% of my sites (100 domains).

If some admin files are being indexed, or you want other parts of a site kept out of the index without wasting link benefit, see the Stallion WordPress SEO Plugin.

David

More Comments by SEO Dave

WordPress Cloak Affiliate Links

WordPress Affiliate Link Cloaking

I think you’ve misunderstood the Stallion link cloaking feature, which is turned on under “SEO Advanced Options” : “Cloak Affiliate Links ON”.

If you have a WordPress site that links to affiliate products, you don’t really want to waste valuable link …

Affiliate Link Cloaking

I checked your website, and the cloak script appears to be working as expected on the affiliate links with the rel="nofollow" attributes added by WPRobot.

The links that lack nofollow attributes won’t be converted to cloaked links. This will happen if a WPRobot …
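For anyone curious how this kind of conversion can work, here’s a minimal Python sketch. It isn’t Stallion’s code. It assumes, going by the later comments about span-type code and the URL sitting in the id="" part of the link, that a cloaked link is a `<span>` carrying the destination in `id`, which a script later turns back into a clickable link. Like the behaviour described above, it only touches anchors with rel="nofollow". The `cloaked-link` class name is hypothetical, and regex matching only copes with simple, well-formed anchors.

```python
import re

# Matches simple, non-nested anchors: <a attrs>text</a>
ANCHOR_RE = re.compile(r"<a\s+([^>]*)>(.*?)</a>", re.IGNORECASE | re.DOTALL)
# Matches double-quoted attributes such as href="..." or rel="..."
ATTR_RE = re.compile(r'([\w-]+)\s*=\s*"([^"]*)"')


def cloak_nofollow_links(post_html):
    """Turn rel="nofollow" anchors into spans that keep the URL in id=""."""

    def replace(match):
        attrs = {name.lower(): value for name, value in ATTR_RE.findall(match.group(1))}
        rel_values = attrs.get("rel", "").lower().split()
        if "nofollow" not in rel_values or "href" not in attrs:
            return match.group(0)  # followed links are left exactly as they were
        # "cloaked-link" is a hypothetical class a script could look for later
        return '<span class="cloaked-link" id="{}">{}</span>'.format(
            attrs["href"], match.group(2)
        )

    return ANCHOR_RE.sub(replace, post_html)


post = (
    '<p><a href="https://merchant.example/go?aff=1" rel="nofollow">Buy now</a> '
    'or read <a href="https://mysite.example/review/">my review</a></p>'
)
print(cloak_nofollow_links(post))
```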

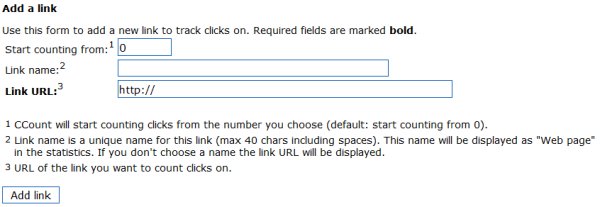

Tracking Links WordPress Plugin

The script I mentioned above counts the number of clicks, but it won’t provide that information to your customer or track conversions.

Beyond the above, Stallion doesn’t track clicks etc., so I’d look for a WordPress link tracking plugin. I doubt you’ll …
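If you’re wondering what counting clicks involves, here’s a minimal Python sketch of a counting redirect. It assumes the links go through a redirect on your own server; the slug table and the `handle_click` function are hypothetical, and a real version would store the counts somewhere persistent. It also shows the limitation mentioned above: it only knows a visitor clicked, not whether they bought anything on the merchant’s site.

```python
from collections import Counter

# Hypothetical table mapping short slugs (e.g. /go/widget) to affiliate URLs.
REDIRECTS = {"widget": "https://merchant.example/widget?aff=123"}
clicks = Counter()  # in memory only; a real script would persist this


def handle_click(slug):
    """Count one click on a slug and return (HTTP status, Location header)."""
    destination = REDIRECTS.get(slug)
    if destination is None:
        return 404, None
    clicks[slug] += 1
    return 302, destination


handle_click("widget")
handle_click("widget")
print(clicks["widget"])         # 2
print(handle_click("missing"))  # (404, None)
```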

Cloak Affiliate Links Guide

I can confirm the Stallion cloaked links are set up correctly. They are meant to look and act like normal text links until you view the source.

The way to check is to view the source; I tend to use Firefox for browsing and …
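Viewing the source can also be automated. The Python sketch below uses the standard-library `html.parser` to list every `<a href>` in a page’s raw HTML, roughly what a crawler that doesn’t run JavaScript would treat as links. The `HrefCollector` and `links_in_source` names are made up for illustration, and the sample page assumes a cloaked link kept in a `<span>`, which shouldn’t show up in the list.

```python
from html.parser import HTMLParser


class HrefCollector(HTMLParser):
    """Collects the href of every <a> tag in raw HTML (no JavaScript is run)."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def links_in_source(page_html):
    collector = HrefCollector()
    collector.feed(page_html)
    collector.close()
    return collector.hrefs


page = (
    '<p><span class="cloaked-link" id="https://merchant.example/go?aff=1">Buy now</span> '
    '<a href="/about/">About</a></p>'
)
print(links_in_source(page))  # ['/about/']
```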

Cloaking Affiliate Links Tutorial

It sounds like you’ve made a mistake in the code, but I can’t tell what without seeing the code used in the test.js file and a link.

What’s the URL of the site this is on?

David …

Which Links Should I Cloak?

The short answer is cloak any links you don’t want to send SEO benefit to.

For me that includes all affiliate links.

That does not include any links to my own sites (unless there’s a reason I don’t want two of my sites …
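The rule above can be written down as a tiny Python function. The domain sets are hypothetical placeholders, and the exceptions for your own sites mentioned above aren’t modelled. Links that are neither yours nor affiliate links are left uncloaked here, since whether to cloak them is a judgement call.

```python
from urllib.parse import urlparse


def _host_in(host, domains):
    """True if host is one of domains or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def should_cloak(url, own_domains, affiliate_domains):
    host = (urlparse(url).hostname or "").lower()
    if _host_in(host, own_domains):
        return False  # let link benefit flow to your own sites
    return _host_in(host, affiliate_domains)  # cloak affiliate links


own = {"mysite.example"}           # hypothetical
affiliates = {"merchant.example"}  # hypothetical
print(should_cloak("https://www.merchant.example/p?aff=1", own, affiliates))  # True
print(should_cloak("https://mysite.example/review/", own, affiliates))       # False
```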

Breaking the Link Cloaking Script

After posting the comment above, I had a thought.

If you aren’t using the Stallion link cloaking script for anything else, you could break it so the Stallion affiliate link code isn’t converted to a clickable link by the JavaScript.

The result would …

Automated Link Cloaking Script

Currently, the Stallion theme has an extra options page (under the Massive Passive Profits menu : SEO) that cloaks all the links the Massive Passive Profits plugin creates.

This stops link benefit from being wasted through affiliate links etc. …

Clickbank Ads Use Javascript Links

Like AdSense and Chitika ads, Clickbank uses JavaScript to serve its ads, so they are already ‘hidden’ from search engines.

If an ad is built using JavaScript in such a way that there isn’t a URL shown in the code (view …

SEO Cloaking Affiliate Links

If you ask a question in a comment, I respond, and you don’t get it, then weeks later you ask the same question on another page (or another site of mine), do you expect a different answer?

I’m not counting …

Cloaked Affiliate Links Tutorial

It’s normal to see the URL when you hover over the Stallion cloaked links.

If you’ve used the span-type code described in the Stallion Theme Cloak Affiliate Links Tutorial above and it looks like the code above (when you edit …

Hide URL of Link in Status Bar

You should see whatever is in the id="" part of the link code, so if it’s a redirected URL, you see the redirect URL in the status bar. So it’s working.

There is a way to hide a link destination in the …
