Google Panda Update article updated August 2014; the main content relates to the 2011 Google Panda updates.

As an SEO expert I’ve spent over 10 years reading what webmasters speculate Google is doing, and what Google has actually done, during its algorithm updates, and invariably most of the speculation is wrong. Nothing groundbreaking has changed with the latest Google Panda algorithm updates (the 2011 updates). They are more of the same move towards a higher quality search engine index, yet there’s a lot of SEO misinformation out there in forums and elsewhere.

Google Algorithm Update Impact

I’ll give an example of a Google update from the past (2004) that gave rise to the term “Google Sandbox Effect”. At the time, and even now, many webmasters believe Google changed its algorithm to penalize/sandbox new websites after an initial boost in the SERPs (a ranking test period).

This made no sense: why would Google give a new site a boost and then take it away by putting the site in the Google sandbox? That’s open to abuse: create a new site, make some cash and move on to the next new domain.

What I believe happened (based on numerous SEO tests and data, and I still believe this in 2014) was that Google’s new algorithm delayed the SEO benefit of new backlinks. You could create a site today and immediately add enough backlinks to rank high, but those links wouldn’t pass full SEO benefit for about a year. Before the change it was under 3 months, and that time frame could be put down to how long it took Google to index pages and compute rankings back then.

This fits Google’s aim of ranking only high quality sites highly, and it matches the available data.

Google Quality Search Engine Rankings

Links from comment, forum and guestbook link spammers would take a year to pass full SEO benefit (about 6 months for any serious SEO benefit). Not only do links of that type tend to lose value over time (deeper content tends to get less link benefit), but the owners of the link-spammed sites also have time to remove the links, and of course Google has time to penalize the blackhat SEO link spammers’ sites. Because spammed links didn’t work as well, spammers needed more of them, leaving a bigger blackhat SEO footprint for Google to track.

Text link buyers were also hit hard. Before the algorithm change you could buy text link ads that took less than three months to show SEO value; afterwards you had to wait over 6 months before seeing any serious SEO benefit and about a year for full SEO benefit. How many small businesses can afford to wait 6-12 months for link benefit while paying a monthly fee?

Update 2014: Over the years the price of buying backlinks has fallen dramatically. Links from modest PR pages used to cost a lot of money; now they are significantly cheaper. That’s market forces for you: once buying backlinks stopped working as quickly, the price dropped.

The reason it looked like the Google algorithm update sandboxed new sites was that newish sites were caught in the update. Before the update their backlinks passed much more link benefit; after the update that benefit was reduced significantly and their Google rankings dropped accordingly. Sites with aged backlinks were not only unaffected directly, but also benefited from less competition from newer sites.

The above SEO hypothesis makes sense and my SEO tests indicate this is close to what occurred.
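To make the arithmetic of this hypothesis concrete, here is a toy model in Python. It assumes that a backlink’s benefit ramps up linearly over a fixed period, using the article’s rough figures (under 3 months before the update, about 12 months after it). The linear ramp and the function names are assumptions for illustration only; Google has never published a formula like this.

```python
# Toy model of the delayed-link-benefit hypothesis.
# ASSUMPTION: benefit grows linearly from 0 to full over the ramp period.
# The ramp lengths are the article's rough estimates, not Google figures.

PRE_UPDATE_RAMP_MONTHS = 3    # "used to be under 3 months"
POST_UPDATE_RAMP_MONTHS = 12  # "about a year" for full benefit


def link_benefit(age_months: float, ramp_months: float) -> float:
    """Fraction (0.0 to 1.0) of a backlink's full benefit at a given age."""
    if age_months <= 0:
        return 0.0
    return min(age_months / ramp_months, 1.0)


def site_link_benefit(link_ages_months: list[float], ramp_months: float) -> float:
    """Total benefit passed by all of a site's backlinks."""
    return sum(link_benefit(age, ramp_months) for age in link_ages_months)


if __name__ == "__main__":
    new_site = [1, 2, 3, 4, 5, 6]          # hypothetical: six links added over six months
    aged_site = [24, 30, 36, 40, 48, 60]   # hypothetical: six links, all over two years old
    for label, ramp in (("before", PRE_UPDATE_RAMP_MONTHS),
                        ("after", POST_UPDATE_RAMP_MONTHS)):
        print(f"{label} update: new site {site_link_benefit(new_site, ramp):.2f}, "
              f"aged site {site_link_benefit(aged_site, ramp):.2f}")
```

With these made-up link ages, the newer site’s total falls from 5 to about 1.75 when the ramp stretches from 3 to 12 months, while the site with aged links keeps its full 6. That is the “sandbox” pattern described above, produced without any penalty on new sites.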

Google Panda Update and High Quality Sites

Google have given us a list of what they are looking for in a site in 2011 and beyond…

http://googlewebmastercentral.blogspot.com/2011/05/more-guidance-on-building-high-quality.html

More guidance on building high-quality sites

Friday, May 06, 2011

- Would you trust the information presented in this article?

- Is this article written by an expert or enthusiast who knows the topic well, or is it more shallow in nature?

- Does the site have duplicate, overlapping, or redundant articles on the same or similar topics with slightly different keyword variations?

- Would you be comfortable giving your credit card information to this site?

- Does this article have spelling, stylistic, or factual errors?

- Are the topics driven by genuine interests of readers of the site, or does the site generate content by attempting to guess what might rank well in search engines?

- Does the article provide original content or information, original reporting, original research, or original analysis?

- Does the page provide substantial value when compared to other pages in search results?

- How much quality control is done on content?

- Does the article describe both sides of a story?

- Is the site a recognized authority on its topic?

- Is the content mass-produced by or outsourced to a large number of creators, or spread across a large network of sites, so that individual pages or sites don’t get as much attention or care?

- Was the article edited well, or does it appear sloppy or hastily produced?

- For a health related query, would you trust information from this site?

- Would you recognize this site as an authoritative source when mentioned by name?

- Does this article provide a complete or comprehensive description of the topic?

- Does this article contain insightful analysis or interesting information that is beyond obvious?

- Is this the sort of page you’d want to bookmark, share with a friend, or recommend?

- Does this article have an excessive amount of ads that distract from or interfere with the main content?

- Would you expect to see this article in a printed magazine, encyclopedia or book?

- Are the articles short, unsubstantial, or otherwise lacking in helpful specifics?

- Are the pages produced with great care and attention to detail vs. less attention to detail?

- Would users complain when they see pages from this site?

Google doesn’t have the ability to automatically check for all the features of a Google “high-quality” site. I’d say Google currently (mid 2011) can’t assess half of the list above, but the list tells us the direction Google is heading, and the Panda algorithm update is a step in that direction.

I’ve bolded the items above that I think Google currently has the ability to rank for.

And from http://googleblog.blogspot.com/2011/02/finding-more-high-quality-sites-in.html

Finding more high-quality sites in search

2/24/2011

A change that noticeably impacts 11.8% of our queries—and we wanted to let people know what’s going on. This update is designed to reduce rankings for low-quality sites—sites which are low-value add for users, copy content from other websites or sites that are just not very useful. At the same time, it will provide better rankings for high-quality sites—sites with original content and information such as research, in-depth reports, thoughtful analysis and so on.

Duplicate Content

It’s all about quality, quality, quality. We’ve all landed on sites built from a cookie-cutter template along the lines of:

“Buy awesome products in NAME OF CITY at rock bottom prices”

Thousands of pages on such a site are pretty much identical apart from the name of a city and a few other variables. These used to litter the Google index; not so much now.

Some perfectly good websites may fall foul of a Google algorithm update that’s trying to remove sites with low quality content: there’s always collateral damage.

Most sites use a template (a header, main content, sidebars/menus and footer sort of layout), and the template parts are duplicated on most pages of the site. This creates the potential for Google to wrongly see a site as low quality if its pages tend to have a relatively small amount of unique content.

I don’t know what ratio of duplicate to unique content results in Google seeing a set of pages as duplicate since the Panda update. For the sake of argument, let’s say the tipping point is 50% duplicate content to 50% unique content (this is just a number for illustration).

If you always create large unique articles, your pages are highly unlikely to look like duplicate content to Google, even with every menu and footer item you can think to add. But if you create very short articles (equivalent to excerpts), your main content might be smaller than the content within the template, and this might trip the Panda algorithm changes.
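The 50% tipping point is only an illustration, but the arithmetic behind it is easy to sketch. The Python below assumes word counts are a fair stand-in for “content” and uses the article’s made-up 50% threshold; it is not how Google actually measures duplication, and the function names are hypothetical.

```python
# Sketch of the template-vs-unique content ratio from the example above.
# ASSUMPTION: the 50% threshold is illustrative only (not a Google number),
# and counting whitespace-separated words approximates "content".

TIPPING_POINT = 0.5


def word_count(text: str) -> int:
    return len(text.split())


def duplicate_share(template_text: str, unique_text: str) -> float:
    """Fraction of a page's words that come from the sitewide template."""
    template_words = word_count(template_text)
    total = template_words + word_count(unique_text)
    if total == 0:
        return 0.0
    return template_words / total


def may_look_duplicate(template_text: str, unique_text: str) -> bool:
    """True when template words outweigh the illustrative tipping point."""
    return duplicate_share(template_text, unique_text) > TIPPING_POINT


if __name__ == "__main__":
    template = "menu " * 400      # header, sidebars and footer: 400 words
    excerpt = "excerpt " * 150    # a short, excerpt-length post
    article = "article " * 2000   # a long, unique article
    print(round(duplicate_share(template, excerpt), 2), may_look_duplicate(template, excerpt))  # 0.73 True
    print(round(duplicate_share(template, article), 2), may_look_duplicate(template, article))  # 0.17 False
```

A 400-word template around a 150-word excerpt crosses the illustrative threshold, while the same template around a 2,000-word article does not. Trimming sitewide widgets shrinks template_text and lowers the share in the same way.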

Look at the low quality content on many websites: have you noticed their articles tend to be under 500 words each? That’s because a lot of those articles were bought on places like the DigitalPoint forums, and the content sellers charge per word. Basically, a 500 word article is cheaper than a 2,000 word article (about the length of this SEO article).

Google is very good at ranking content that’s not part of the template while taking the template content into account (it is part of the page after all), so I wouldn’t worry too much about the template as long as you create reasonably sized, quality content posts. What you lose in one area of a template you gain in another SEO way.

Reduce Duplicate Content with Silo SEO

SEO wise, the perfect webpage would have ONLY content related to the article, what SEOs call a silo SEO structure. With a silo SEO structure all links would point to other webpages related to the article and all template parts would support the article. But this has the potential to damage the other pages on the website, so we can’t go 100% silo SEO: all sections/pages of a site need links.

The Stallion Responsive WordPress SEO Package, for example, is very good at making template parts help rank the unique content, but because some of it is automated it can’t be 100% SEO perfect (SEO silos take planning and manual actions). Ideally every link off this webpage would cover a keyword related to the main article keywords (Google Panda Update), and that can’t be achieved with automated sidebars and similar widgets.

Note: this website does use an SEO silo structure (see the silo SEO structure link above for details); it’s a balance between silo SEO, making sure the entire site is indexed, and user experience.

If you did manage to achieve a 100% silo SEO structure (I could achieve it with Stallion Responsive), it would mean your home page gets fewer backlinks, other posts on the site get fewer backlinks, and your visitors would find it almost impossible to navigate around the site! Each page’s SEO is a compromise between perfect onpage SEO content for that page (a perfect SEO silo structure) and helping other pages on the site (via links) that might not be related to the article’s content.
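One rough way to picture this compromise is to measure what share of a page’s internal links stay inside its own silo. The sketch below is hypothetical: the Page class, the silo labels and the URLs are invented for illustration, and it assumes each page belongs to exactly one silo.

```python
from dataclasses import dataclass, field

# Hypothetical silo check. ASSUMPTION: every page is tagged with exactly
# one topic silo and lists the internal URLs it links to.


@dataclass
class Page:
    url: str
    silo: str
    links_to: list[str] = field(default_factory=list)


def silo_purity(page: Page, site: dict[str, Page]) -> float:
    """Share of a page's internal links that point into its own silo."""
    internal = [url for url in page.links_to if url in site]
    if not internal:
        return 1.0  # no internal links, so nothing leaks out of the silo
    same_silo = sum(1 for url in internal if site[url].silo == page.silo)
    return same_silo / len(internal)


if __name__ == "__main__":
    pages = [
        Page("/google-panda-update/", "seo",
             ["/silo-seo/", "/backlinks/", "/", "/wordpress-themes/"]),
        Page("/silo-seo/", "seo"),
        Page("/backlinks/", "seo"),
        Page("/", "home"),
        Page("/wordpress-themes/", "themes"),
    ]
    site = {page.url: page for page in pages}
    print(silo_purity(site["/google-panda-update/"], site))  # 0.5
```

A perfect silo would score 1.0. Here half of the links leave the seo silo (to the home page and a themes page), which is the trade-off between onpage SEO and sitewide navigation described above.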

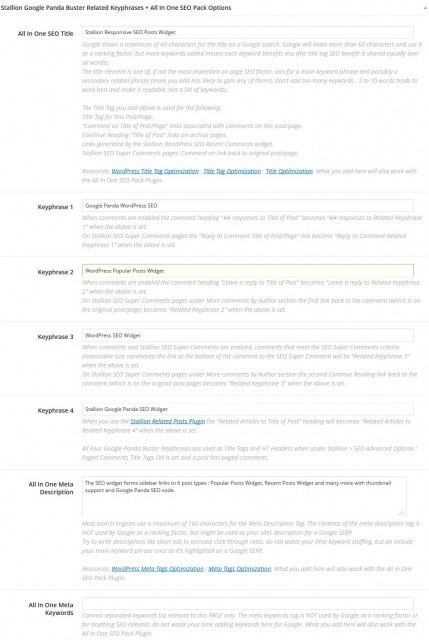

The screenshot above shows some of the Google Panda Buster Options built into Stallion Responsive. The keyphrases are used by widget links in a way that reduces sitewide duplicate content (a really cool SEO feature).

Question regarding quality content: if you compare two unique articles, one 250 words long and the other 1,000 words long, which do you think would have more user value?

It’s obvious the 1,000 word article is more likely to contain more information. Article length alone tells Google nothing about actual quality, but Google uses over 200 ranking factors, some of which will indicate quality. So you tell me: without taking the other ranking factors into account, which should Google rank higher?

You’ll note I write large articles (this one is close to 2,000 words) because they tend to rank higher.

Google Panda SEO Tips

If your articles tend to have a small amount of unique content, there are ways with WordPress to generate relatively unique content on a page.

Related posts plugins, for example, add extra content to WordPress posts and static pages based on keyword relevance.
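As a rough idea of how keyword-based related posts can work, the sketch below ranks other posts by keyword overlap using Jaccard similarity (shared keywords divided by all keywords). It assumes each post already has a set of keywords; real WordPress related posts plugins use their own scoring, and the titles and keywords here are made up.

```python
# Minimal related-posts sketch using Jaccard similarity over keyword sets.
# ASSUMPTION: posts already carry keyword sets; this is not the algorithm
# of any particular WordPress plugin.


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared keywords divided by the total distinct keywords."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def related_posts(current: str, posts: dict[str, set[str]], limit: int = 3) -> list[str]:
    """Return up to `limit` titles most keyword-relevant to `current`."""
    keywords = posts[current]
    scored = [
        (jaccard(keywords, other_keywords), title)
        for title, other_keywords in posts.items()
        if title != current
    ]
    # Highest score first, ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    # Skip posts that share no keywords at all.
    return [title for score, title in scored if score > 0][:limit]


if __name__ == "__main__":
    posts = {
        "Google Panda Update": {"google", "panda", "seo", "duplicate content"},
        "Silo SEO Structure": {"seo", "silo", "internal links"},
        "Duplicate Content Penalty": {"google", "duplicate content", "seo"},
        "WordPress Theme Review": {"wordpress", "theme"},
    }
    print(related_posts("Google Panda Update", posts))
    # ['Duplicate Content Penalty', 'Silo SEO Structure']
```

Each post gets a different list of related links, so the widget adds content that varies from page to page rather than repeating sitewide.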

Comments can help a lot, but it’s not always easy to generate comments on a page with a small amount of content.

Reduce duplicate content to a minimum: do you need the sitewide monthly archive widget links, or the sitewide tags widget? Less duplicate sitewide content = more unique content, relatively speaking.

The best SEO technique is to add more unique content; it might sound obvious, but it works.

David Law

Google Panda SEO Advice

I have a site that was hit during the Google Panda updates and dropped from #2 to #14 on the Big G.

I have thought about what happened and I wonder if you could comment on the following possibilities.

1. Are forum profiles no longer valuable, or even harmful?

2. Is it a problem that I have many sites hosted on the same server?

3. I let seolinkvine post articles to my site automatically: could that be duplicate content?

4. Is it the large number of backlinks on PR0 and PR1 sites?

5. Did allowing content that was not related to the niche hurt?

6. Could someone else have sabotaged my ranking somehow? Is this possible?

Those are the main ideas I have, and I would love to get some feedback before my other sites get hit.
